Loughborough University physicists have developed a device that can process data that changes over time directly in hardware, rather than relying on software running on conventional computers.

Their latest research, published in Advanced Intelligent Systems, suggests the approach could be up to 2,000 times more energy efficient than conventional software-based methods.

“This is exciting because it shows we can rethink how AI systems are built,” said Senior Lecturer in Physics, Dr Pavel Borisov, who led the research team funded by the Engineering and Physical Sciences Research Council (EPSRC).

“By using physical processes instead of relying entirely on software, we can dramatically reduce the energy needed for these kinds of tasks.”

How it works

Many real-world AI problems involve processing data that changes over time and identifying patterns to predict what happens next – for example in weather systems, biological processes or sensor data. A technique called reservoir computing is often used for this: incoming data is passed through a fixed system that transforms it into a form in which patterns are easier to detect and predict. This transformation is typically carried out in software.
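For readers who want to see what the software version of that transformation looks like, here is a minimal sketch of a conventional reservoir. It is an illustration only, not the researchers' method: the function names, the 20-node size, the tanh nodes and the random weights are all assumptions chosen to keep the example short.

```python
import math
import random


def make_reservoir(n_nodes, seed=0, scale=0.9):
    """Build fixed random input and recurrent weights (never trained).

    All sizes and scales here are illustrative assumptions.
    """
    rng = random.Random(seed)
    w_in = [rng.uniform(-1.0, 1.0) for _ in range(n_nodes)]
    # Dividing by n_nodes keeps the feedback weak, so old inputs fade away.
    w_res = [[scale * rng.uniform(-1.0, 1.0) / n_nodes for _ in range(n_nodes)]
             for _ in range(n_nodes)]
    return w_in, w_res


def run_reservoir(inputs, w_in, w_res):
    """Map a scalar time series to a sequence of higher-dimensional states."""
    n = len(w_in)
    state = [0.0] * n
    states = []
    for u in inputs:
        # Each node mixes the new input with the previous state of all nodes.
        state = [
            math.tanh(w_in[i] * u + sum(w_res[i][j] * state[j] for j in range(n)))
            for i in range(n)
        ]
        states.append(state)
    return states


signal = [math.sin(0.2 * t) for t in range(50)]
w_in, w_res = make_reservoir(n_nodes=20)
states = run_reservoir(signal, w_in, w_res)
print(len(states), len(states[0]))  # 50 time steps, 20 values per step
```

Each input value becomes a list of 20 numbers that also depends on what came before it, which is the "memory" a reservoir relies on. In the hardware approach described in this article, a physical device would play the role that `run_reservoir` plays here.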

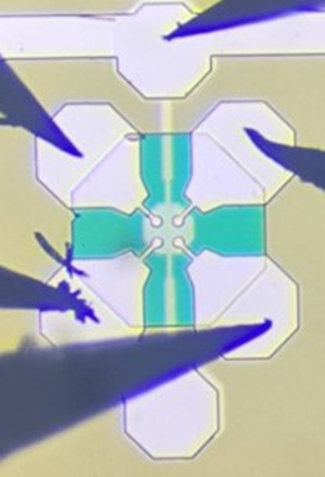

The Loughborough device performs this type of computation directly in hardware. It is a type of memristor – an electronic component that can store information about past inputs – made of nanoporous oxide. It contains random nanopores that create multiple electrical pathways, and these pathways act like the hidden processing layer of a neural network, allowing the material itself to carry out part of the computation.

Study findings

In their study, the researchers showed that the device can process time-dependent data and, when its output is fed into a linear computer model, can be used to identify patterns and make short-term predictions.
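The division of labour described above, a fixed system that transforms the data followed by a simple linear model that is the only part trained, can be sketched in a few lines of Python. This is a hedged illustration: the software network below is a hypothetical stand-in for the physical device, and the sine-wave task, node count, ridge value and helper names are assumptions rather than details from the study.

```python
import math
import random


def run_reservoir(inputs, n_nodes=20, seed=1):
    """Hypothetical stand-in for the device: a fixed random tanh network."""
    rng = random.Random(seed)
    w_in = [rng.uniform(-1, 1) for _ in range(n_nodes)]
    w_res = [[0.9 * rng.uniform(-1, 1) / n_nodes for _ in range(n_nodes)]
             for _ in range(n_nodes)]
    state, states = [0.0] * n_nodes, []
    for u in inputs:
        state = [math.tanh(w_in[i] * u
                           + sum(w * s for w, s in zip(w_res[i], state)))
                 for i in range(n_nodes)]
        states.append(state + [1.0])  # constant 1.0 acts as a bias feature
    return states


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def train_readout(states, targets, ridge=1e-4):
    """Ridge regression: solve (S^T S + ridge * I) w = S^T y."""
    k = len(states[0])
    gram = [[sum(s[i] * s[j] for s in states) + (ridge if i == j else 0.0)
             for j in range(k)] for i in range(k)]
    rhs = [sum(s[i] * y for s, y in zip(states, targets)) for i in range(k)]
    return solve(gram, rhs)


def predict(weights, state):
    """The linear model: a weighted sum of the reservoir state."""
    return sum(w * s for w, s in zip(weights, state))


series = [math.sin(0.2 * t) for t in range(501)]
states = run_reservoir(series[:-1])   # the state at step t ...
targets = series[1:]                  # ... is paired with the value at t + 1
washout, split = 20, 400              # skip start-up transient, then train/test
weights = train_readout(states[washout:split], targets[washout:split])
errors = [(predict(weights, s) - y) ** 2
          for s, y in zip(states[split:], targets[split:])]
test_mse = sum(errors) / len(errors)
print(f"held-out mean squared error: {test_mse:.2e}")
```

Only `train_readout` learns anything; the reservoir weights are never adjusted. That is why moving the reservoir into a physical material can remove much of the computation from software, leaving just a small linear fit.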

They tested the system using the Lorenz-63 system – a well-known mathematical model of chaos linked to the “butterfly effect”, where small changes can lead to very different outcomes – as well as tasks including recognising simple pixelated images of numbers and performing basic logic operations.
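The "butterfly effect" can be reproduced with a short simulation. The sketch below integrates the standard Lorenz-63 equations from two starting points that differ by one part in 100 million and tracks how far apart they drift. The parameter values (sigma = 10, rho = 28, beta = 8/3) are the usual textbook choices and the step size and starting point are arbitrary; the article does not state which settings the study used.

```python
def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz-63 equations (textbook parameters)."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance one time step with the classical fourth-order Runge-Kutta method."""
    def shifted(k, h):
        return tuple(s + h * ki for s, ki in zip(state, k))

    k1 = lorenz(state)
    k2 = lorenz(shifted(k1, dt / 2))
    k3 = lorenz(shifted(k2, dt / 2))
    k4 = lorenz(shifted(k3, dt))
    return tuple(s + dt / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))


def separation(p, q):
    """Straight-line distance between two points in (x, y, z) space."""
    return sum((a - b) ** 2 for a, b in zip(p, q)) ** 0.5


a = (1.0, 1.0, 1.0)
b = (1.0 + 1e-8, 1.0, 1.0)   # same start, nudged by one part in 100 million
dt, steps = 0.01, 4000
max_gap = 0.0
for step in range(steps + 1):
    gap = separation(a, b)
    max_gap = max(max_gap, gap)
    if step % 1000 == 0:
        print(f"t = {step * dt:4.0f}  separation = {gap:.3e}")
    a, b = rk4_step(a, dt), rk4_step(b, dt)
```

The printed separation starts near 1e-8 and grows by many orders of magnitude over the run, which is why only short-term prediction of such a system is realistic and why it makes a demanding test case.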

In these tests, the model was able to use the memristor-processed data to successfully predict the short-term behaviour of the chaotic Lorenz system and reconstruct missing data. It also correctly identified the pixelated numbers and carried out basic logic operations, showing that the same device can support a range of different tasks.

The researchers say this approach could help address a growing challenge in AI. As systems become more powerful, their energy demands are increasing, raising concerns about long-term sustainability. By shifting computation from software into hardware, it may be possible to achieve similar results using far less energy.

“Inspired by the way the human brain forms very numerous and seemingly random neuronal connections between all its neurons, we created complex, random, physical connections in an artificial neural network by designing pores in nanometre-thin films of niobium oxide as part of a novel electronic device,” said Dr Borisov.

“We showed how one can predict the future evolution of a complex time series using these devices at two thousand-times lower energy consumption compared to a standard software-based solution.”

Next steps

The researchers say the system is still at an early stage, with tests carried out on relatively simple tasks. Further work is needed to scale up the technology, increase the complexity of the networks and assess how it performs with noisier, real-world data.

“The next steps are to increase the complexity of the neural networks and to conduct tests with input data that include much more signal noise,” Dr Borisov said. “We believe this is a scalable and practical approach to creating small, industry-compatible devices for AI applications with much better energy efficiency and offline capabilities.”

Professor Sergey Saveliev, an expert in theoretical physics and one of the study’s authors, added: “This is a great example of how fundamental physics can contribute to modern computations, avoiding huge computational overheads by using the complexity of physical systems as a high dimensional filter for data.”